
It can update existing mirrored sites and resume interrupted downloads.

HTTrack Website Copier can download a website from the internet to a local directory, building recursively all directories and getting all files from the server. It also has a webcopier portable version that can run from a USB flash drive. HTTrack Website Copier is another free and open source offline browser tool for Windows devices. No scheduling or bookmark importing features No support for JavaScript parsing or dynamic websites Supports file filtering and rule configuration Cyotek WebCopy also has features like filtering files, configuring rules, viewing reports, and more. It can remap links to resources to match the local path.

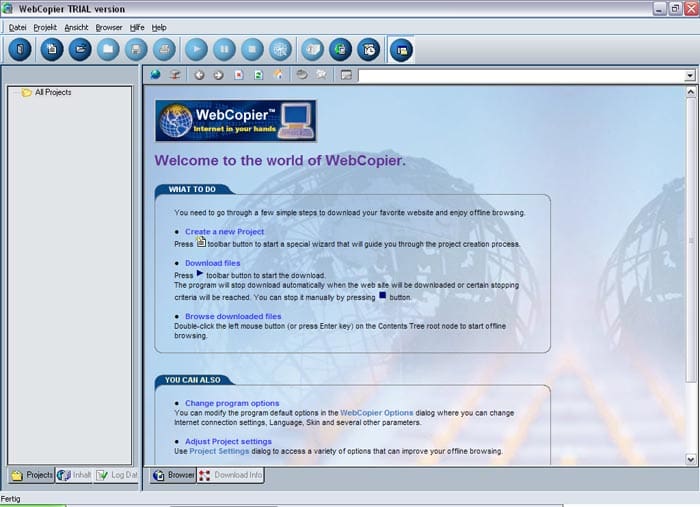

Cyotek WebCopy can scan a website and download its content, including HTML, images, videos, and other files. It has a webcopier portable version that can run from a USB flash drive. No support for dynamic websites that use server-side scriptingĬyotek WebCopy is a free and easy-to-use offline browser tool for Windows devices. Not free (costs $49.95 for Windows version and $39.95 for Mac version) Supports JavaScript, Java, and Flash filesĬan print entire websites or parts of themĬan import bookmarks from Internet Explorer and Netscape WebCopier also has features like scheduling downloads, importing bookmarks, using templates, and putting downloaded files on a CD. It can download up to 100 files simultaneously, and it supports proxy servers and secure websites.

WebCopier can copy entire websites or specific directories, and it can follow links precisely, including JavaScript parsing. It is available for both Windows and Mac devices, and it has a webcopier portable version that can run from a USB flash drive. WebCopier is one of the most popular and powerful offline browser tools on the market. We will compare their features, pros, and cons, and help you choose the best one for your needs. We will focus on webcopier portable tools, which are tools that can run without installation and can be carried on a USB flash drive or other removable media. Just tell us what data you need.In this article, we will review some of the best offline browser tools available for Windows and Mac devices. Web data partners like Zyte can take care of all the hassles of web scraping. Get web data directlyįor businesses, it makes sense to not worry about crawling and scraping so you can focus purely on the insights from that data. Depending on the business use case, these can be product name or product price, or some text or other information from any type of website. The URL can be one, but when you scrape, you extract the data not necessarily for the URL but for other data fields that are displayed on the website. With web crawling the output is a lot more simple because it's just a list of URLs - you can have other fields as well but the main elements are the URLs.Īnd with web scraping, you usually have a lot more fields - 5-10-20 or more data fields. Or maybe the URL needs to contain some kind of keyword for example and you collect all those URLs - and then you create a scraper that extracts predefined data fields from those pages. So first you create a crawler that will output all the page URLs that you care about - it can be pages in a specific category on the site or in specific parts of the website. 
For example, search engines crawl the web so they can index pages and display them in the search results.īut another data crawling example would be when you have one website that you want to extract data from - in this case you know the domain - but you don't have the page URLs of that specific website. So that you can do something with them later. And this is the reason you crawl: you want to find the URLs. With crawling, you probably don't know the specific URLs and you probably don't know the domains either. With scraping you usually know the target websites, you may not know the specific page URLs, but you know the domains at least. Web scraping is all about the data - the data fields you want to extract from specific websites. Going deeper, there's a big difference in the purpose of these two things and how they work. This means you extract data and do something with it, like storing it in a database or further processing it. So you first crawl - or discover - the URLs, download the HTML files, and then scrape the data from those files. Usually, in web data extraction projects, you need to combine crawling and scraping. While crawling is about finding or discovering URLs or links on the web. The short answer is that web scraping is about extracting data from one or more websites.
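The crawl-then-scrape workflow described above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the site `shop.example`, the in-memory `PAGES` dictionary that stands in for real HTTP downloads, the `/product/` URL keyword, and the `name` and `price` class names are all hypothetical and do not come from any particular website or tool.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

# Hypothetical in-memory "website" (URL -> HTML). A real project would
# download each page over HTTP instead of reading from this dictionary.
PAGES = {
    "https://shop.example/": '<a href="/category/books">Books</a> <a href="/about">About</a>',
    "https://shop.example/about": '<a href="/">Home</a> <a href="https://other.example/">Partner</a>',
    "https://shop.example/category/books": '<a href="/product/1">A</a> <a href="/product/2">B</a>',
    "https://shop.example/product/1": '<h1 class="name">Crawling 101</h1><span class="price">12.50</span>',
    "https://shop.example/product/2": '<h1 class="name">Scraping 101</h1><span class="price">15.00</span>',
}


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


class FieldParser(HTMLParser):
    """Collects the text of elements whose class attribute is a wanted field."""

    def __init__(self, fields):
        super().__init__()
        self.fields = set(fields)
        self.current = None
        self.data = {}

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls in self.fields:
            self.current = cls

    def handle_data(self, data):
        if self.current:
            self.data[self.current] = data.strip()
            self.current = None


def crawl(start_url, keyword):
    """Crawling: discover same-domain URLs and keep those containing keyword."""
    domain = urlparse(start_url).netloc
    seen, queue, matches = {start_url}, deque([start_url]), []
    while queue:
        url = queue.popleft()
        html = PAGES.get(url)
        if html is None:  # page not available, skip it
            continue
        if keyword in url:
            matches.append(url)
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            link = urljoin(url, href)  # resolve relative links
            if urlparse(link).netloc == domain and link not in seen:
                seen.add(link)
                queue.append(link)
    return matches  # the crawler's output is just a list of URLs


def scrape(url):
    """Scraping: extract predefined data fields from one known page."""
    parser = FieldParser(["name", "price"])
    parser.feed(PAGES[url])
    return {"url": url, "name": parser.data.get("name"),
            "price": float(parser.data["price"])}


if __name__ == "__main__":
    product_urls = crawl("https://shop.example/", "/product/")
    for record in (scrape(u) for u in product_urls):
        print(record)
```

Note how the two outputs differ in shape: `crawl` returns a plain list of URLs, while `scrape` returns one record per page with several data fields, which matches the distinction drawn in the text.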
